The Illinois State Police (ISP) is looking to improve road safety for drivers and law enforcement with a new emergency alert system that would provide more crash notifications via popular traffic apps.

A group of House Democrats reintroduced legislation late last week that aims to limit the ability of law enforcement agencies to use facial recognition technologies.

The White House Office of Science and Technology Policy (OSTP) is requesting public input on how to improve the collection, use, and transparency of criminal justice data at the state, local, Tribal, and territorial (SLTT) level.

Technologies such as artificial intelligence (AI), facial recognition, and drones are poised to improve law enforcement by making police more productive and effective. However, their deployment also needs to be accompanied by new thinking about possible downsides, including bias and cybersecurity risks, according to a Jan. 9 report from the Information Technology and Innovation Foundation (ITIF).

Law enforcement agencies that use forensic algorithms to aid in criminal investigations face numerous challenges, according to the Government Accountability Office (GAO), including difficulty interpreting and communicating results, as well as addressing potential bias or misuse.

California legislators on Sept. 12 passed a bill that would ban facial recognition technologies in state and local law enforcement body cameras for three years.

About five years ago, many law enforcement officials wondered if the cloud was safe enough to hold their data. Now the FBI, the nation’s top law enforcement agency, is considering a move to a large-scale, commercial software cloud provider.

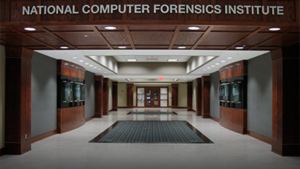

On May 16, the U.S. House passed a bill formally authorizing the National Computer Forensics Institute within the Department of Homeland Security to train state, local, and tribal law enforcement on how to handle and prosecute cybercrime.